Best Free AI Video Generators: Which No-Cost Option Is Best?

In 2026, video content is king. Find out which AI video generator can help you keep up in the digital age.

We dissect the ownership of the world's most popular AI chatbot, ChatGPT, and its creator OpenAI.

We've been tracking the AI errors and mistakes that have made the news over the last few years, so you don't have to.

Small startups and huge corporations alike have been consistently impacted by data breaches over the last few years.

2026 looks set to follow last year's trend for tech layoffs. We track the latest job cuts from Amazon, Salesforce and others.

The fear of AI job replacement is very real, with many companies openly admitting that the tech is eliminating jobs.

Usage remains high, though, with 78% of US businesses using the technology on a regular basis.

Plus, AI-powered phishing campaigns represented over 80% of social engineering events in 2025.

Between tariffs and driver shortages, logistics businesses have had to make some unfortunate decisions in recent months.

Nearly half of fleet professionals (48%) say they are looking to adopt asset tracking systems.

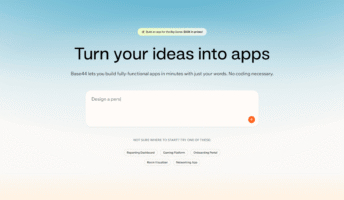

Base44 added a whole bunch of new features to its vibe coding platform after its Super Bowl ad.

Our recent data suggests that logistics professionals are more influenced by government policy than sustainability goals.

Gartner has published its cybersecurity predictions for 2026 — and companies need to tighten up their AI agent oversight.

Courses are available from big tech firms like Google and Microsoft, as well as online education platforms like Udemy.

AI coding is big right now, and LinkedIn has given employers a way to track down workers with the exact skills they need.

A report suggests that AI brings both new strengths and more sophisticated risks to cybersecurity.

Only 4% of employees said that they used AI on a daily basis at work in 2023.

There's a new AI website builder in town — Wix Harmony. Here's everything you need to know about the latest Wix innovation.

This year, the landscape of cybersecurity will never be the same. Here's what to watch for, from data surges to AI malware.

Marc Benioff compared the technology to social media in regard to its negative impact on young users.

A recent survey has found that while some CEOs are seeing gains from AI, others have yet to see any financial return.